Q: What do an iPhone X and a 50-year-old CRT have in common?

A: Each has a portion of the display that you can’t effectively use (though for entirely different reasons).

History lesson

CRT screens are curved, they don’t have perfectly square corners, the beam overscans a bit, and many did not have variable focus to keep the beam sharp across the entire screen. All of these factors combine to cause problems at the edge of the screen, but just how much varies from screen to screen. TV standards resolve this by defining safe zones guaranteed to be usable on almost any TV.

LCDs and other modern display technologies don’t have the same limitations as CRTs, but to maintain compatibility with content made for CRTs, many TVs overscan the input and then crop it, causing the exact same problem (and preventing digital sources from being displayed at 1:1 to boot). Depending on your TV, you might be able to reduce or disable the effect when playing games (sometimes it depends on the input being used, with VGA or DVI displayed as-is and HDMI overscanned), but you can’t count on that working for your users.

Safe zones and games

Safe zones are something console developers have had to deal with since the beginning, but they’re relatively new to mobile developers as phones add notches and wrap-around screens. As long as you paint within the lines, everyone will be able to see what you draw.

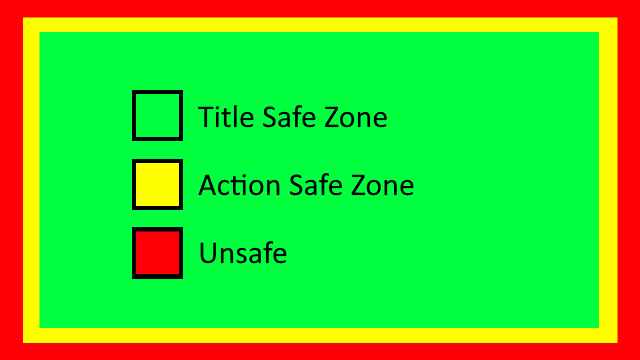

Typically the safe zones are split up into two concepts:

- Title safe area – This is where text is fully readable. Anything important to the player or viewer should be displayed here.

- Action safe area – This is at least as large as the title safe area, and may be larger. The area between this and the title safe area is still probably visible, so you’d want things like background graphics to still extend here.

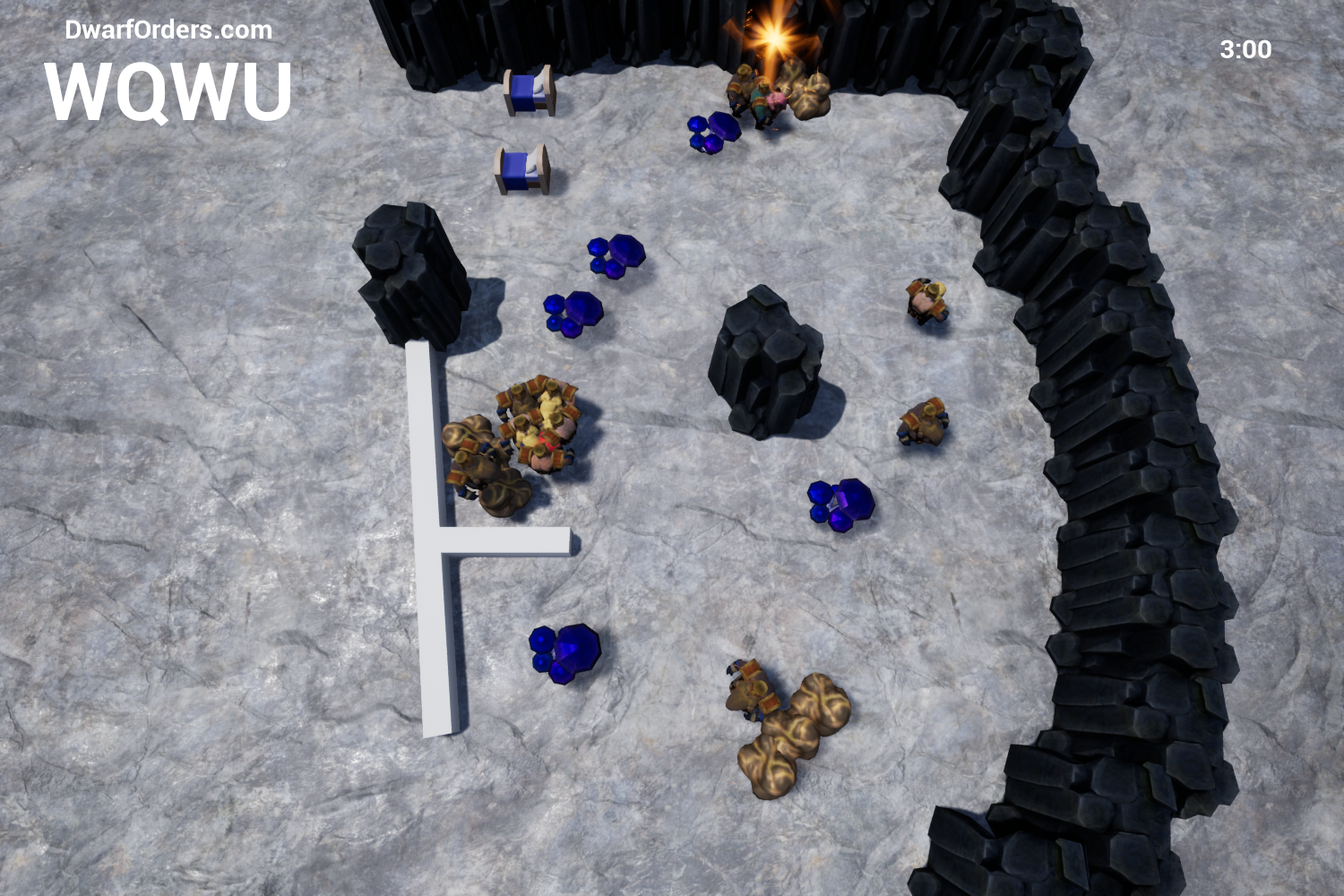

For TV the distinction matters, but for the most part in games it’s unimportant. Make sure all UI elements stay within the title safe zone and you’re probably OK with all of the 3D elements filling the full screen. The only time when you might want to distinguish between them (assuming the platform you’re on even makes the distinction) is if you have a fixed camera view: you don’t want the player (or threats to the player) to travel beyond the action safe zone (because they might become invisible in the overscan), but clamping them all the way to the title safe zone might feel a little bit conservative.

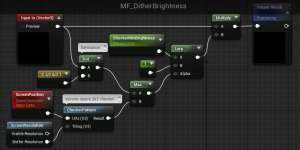

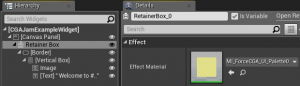

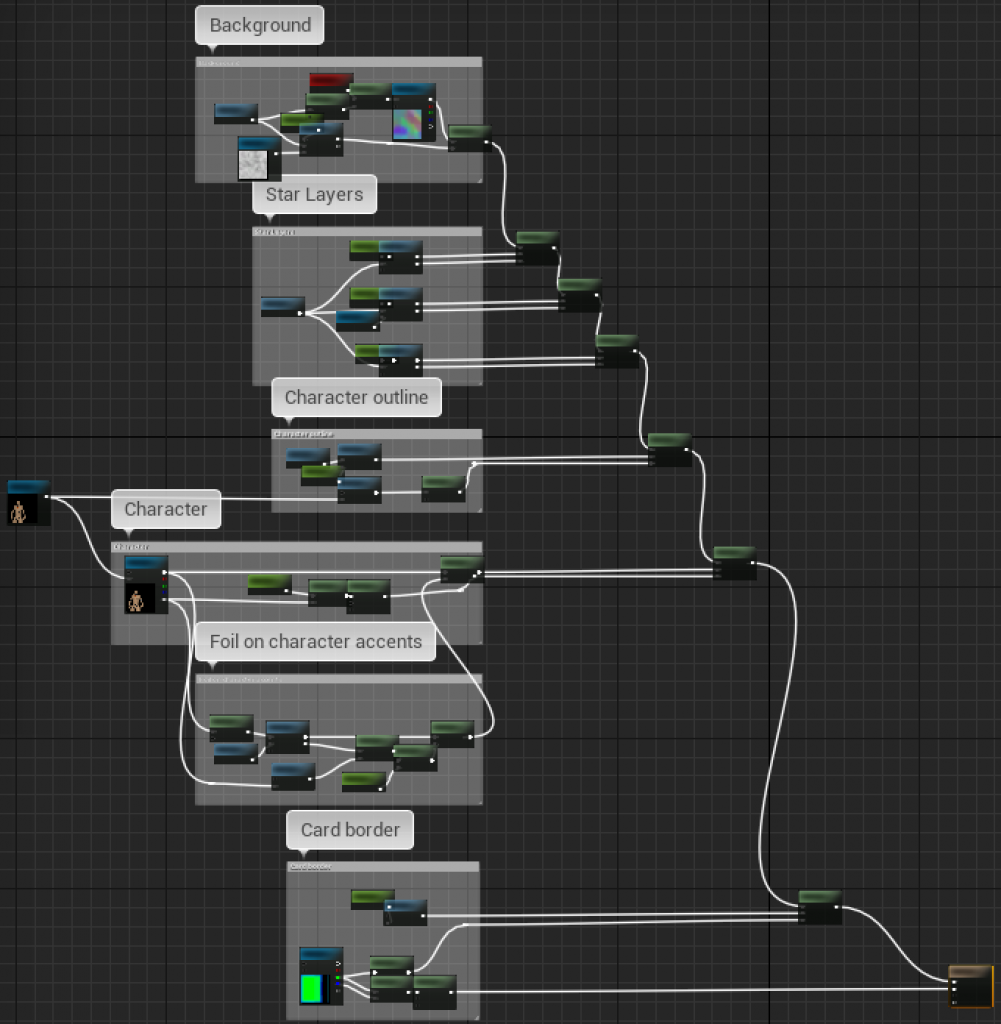
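The fixed-camera case above boils down to clamping a screen-space position to the action safe rectangle. Here is a minimal sketch of that idea in plain C++, not engine code: `Vec2`, `Rect`, `MakeSafeRect`, and `ClampToRect` are hypothetical names made up for illustration, and it assumes the unsafe border is split evenly between opposite edges of the screen.

```cpp
#include <algorithm>
#include <cassert>

// Hypothetical helper types for illustration only; these are not engine API.
struct Vec2 { float x; float y; };
struct Rect { float left; float top; float right; float bottom; };

// Build a centered rectangle that covers `ratio` of the screen on each axis.
// Assumption: the unsafe border is split evenly between opposite edges.
Rect MakeSafeRect(float screenWidth, float screenHeight, float ratio) {
    assert(ratio > 0.0f && ratio <= 1.0f);
    const float padX = screenWidth * (1.0f - ratio) * 0.5f;
    const float padY = screenHeight * (1.0f - ratio) * 0.5f;
    return Rect{padX, padY, screenWidth - padX, screenHeight - padY};
}

// Clamp a screen-space position so it never leaves the given rectangle.
Vec2 ClampToRect(Vec2 p, const Rect& r) {
    return Vec2{
        std::min(std::max(p.x, r.left), r.right),
        std::min(std::max(p.y, r.top), r.bottom)};
}
```

In practice you would build the rectangle from whatever safe zone the platform reports rather than from a single ratio, then clamp the player and any threats to the action safe rectangle while laying out UI against the (possibly smaller) title safe one.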

How to paint within the lines in UE4

If you’re using UMG for all of your UI, USafeZone is your friend. It’s a container panel that should wrap your HUD or the important parts of your front-end UI, and it lets you pick whether it adjusts to match the title or action safe zone. You don’t need a bunch of them, just one at the top level (typically in the widget you add directly to the viewport overlay stack).

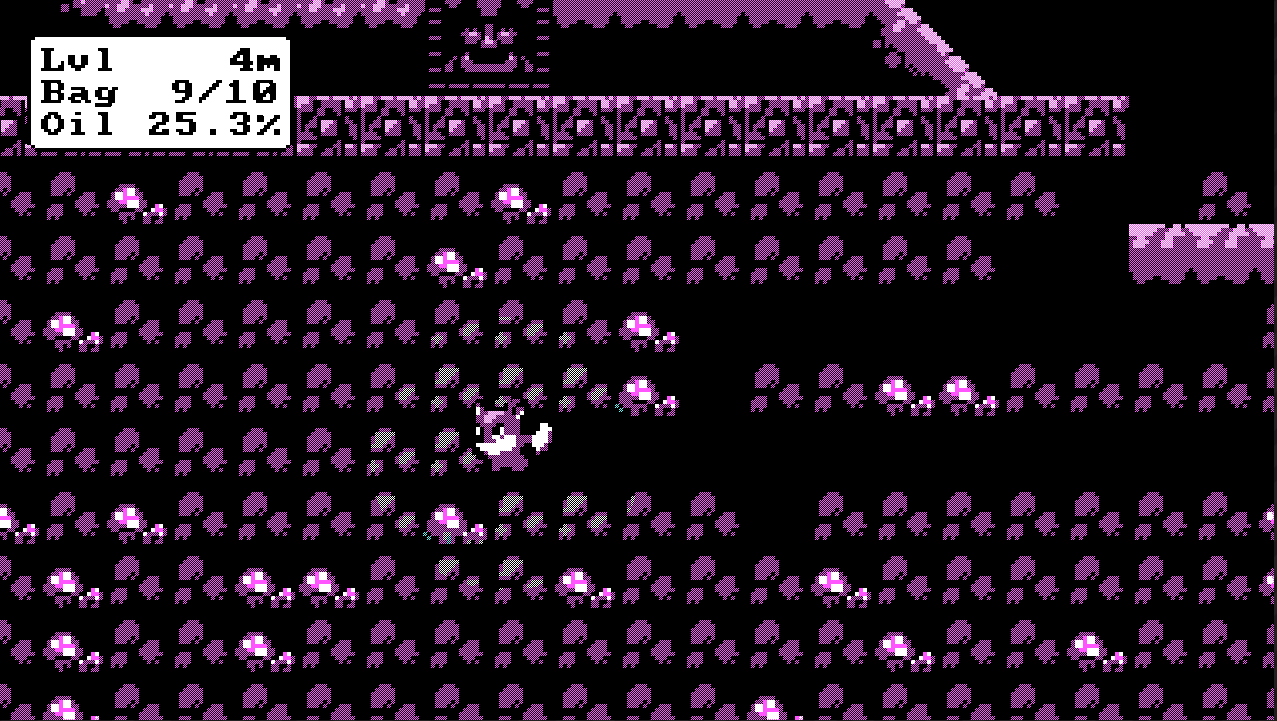

USafeZone uses FDisplayMetrics to query the safe zone for the current platform, which is also where to look if you ever need to access the safe zone information directly in C++. On platforms that have no native safe zones (like PCs), you can use the console variables r.DebugSafeZone.TitleRatio and r.DebugActionZone.ActionRatio to simulate one. Both range from 0 to 1 and default to 1, meaning 100% of the display is safe. A typical test value is around 0.9. An important caveat is that safe zones were originally designed for full-screen use, so these percentages are only accurate if you test in fullscreen. For quick spot checks it doesn’t matter, as you’re just verifying that all the important stuff moved inwards, but when fine-tuning layouts you probably want to see something representative by going to windowed fullscreen or at least maximizing the window.
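To see why the window size matters, here is a small arithmetic sketch in plain C++. It assumes the debug padding is computed from the full display size and split evenly between opposite edges, which is consistent with the fullscreen caveat above but is an assumption, not something confirmed here. `PaddingPerEdge` and `EffectiveRatio` are hypothetical helpers, not engine functions.

```cpp
#include <cassert>

// Hypothetical helper, not engine API: padding in pixels per edge that a
// debug ratio implies along one axis of the display.
// Assumption: the unsafe border is split evenly between opposite edges.
float PaddingPerEdge(float displaySize, float ratio) {
    assert(ratio > 0.0f && ratio <= 1.0f);
    return displaySize * (1.0f - ratio) * 0.5f;
}

// Fraction of a window axis that stays safe when padding measured against
// the full display is applied to a (possibly smaller) window.
float EffectiveRatio(float windowSize, float paddingPerEdge) {
    assert(windowSize > 0.0f);
    return 1.0f - (2.0f * paddingPerEdge) / windowSize;
}
```

Under those assumptions, a ratio of 0.9 on a 1920-pixel-wide display gives roughly 96 pixels of padding per side. Apply that same padding to a 1280-pixel-wide window and only about 85% of the width is left as safe, while a window spanning the full 1920 pixels gets the intended 90%.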

You can also visualize the current safe zone (on any platform) using r.DebugSafeZone.Mode, which can draw a translucent border on the screen:

0: Do not display the safe zone overlay

1: Display the overlay for the title safe zone

2: Display the overlay for the action safe zone

Not using Unreal?

I can’t help you with consoles without breaking NDAs. Check the vendor documentation for the certification requirements and for how to query the current safe zone.

For iOS, safe areas are a new concept starting in iOS 11; UIEdgeInsets is a good anchor term to search for. I’m not sure if Android has an equivalent API yet, but it’s going to be important soon.